Background

The Carina project was motivated by the need to speed up the deployment of services onto the OpenNebula private cloud at RIM. Different teams are in the process of on-boarding their services onto our OpenNebula-based cloud. Typically these services are composed of multiple scale-out clusters that are networked together. We expect that once moved onto the cloud, these services will want to operate in an elastic and highly available manner in order to take advantage of the flexibility to rapidly provision new machines.

OpenNebula is an excellent tool for creating individual VMs and supports easy scripting of more complex VM configurations. Rather than have each of our services reinvent the wheel, the Carina project was an attempt to standardize the process of automating multi-VM deployments and setting up auto-scaling and availability management policies in the cloud. We looked at other projects like Claudia and OneService, took some of their ideas, and extended them with our own lightweight implementation.

Requirements

The following are some of the requirements that motivated the design and development of Carina. The list is still evolving, as we have tried to follow an agile process of iterating on feedback from our stakeholders.

- OS Image Library and Lifecycle Management: Support standard gold images for various OSes, and appliances for firewalls, load-balancers, etc.

- Middleware Independence: Should support any type of application or PaaS offering.

- Multi-VM Cluster Deployment: The system should support “one-click” deployment of a collection of interconnected VMs with load-balancers and firewalls configured.

- Composite Services and Service Interdependencies: Support interconnected networks of clusters which have dependencies on each other.

- Application Service Contextualization Framework: Facilitate automation and configuration of VMs in cluster environments by specifying OpenNebula contextualization scripts and variables, and automatically applying them to images.

- Monitoring usage of VM collections: Be able to collect and aggregate OS or app-specific metrics across a cluster.

- Policy-based Elasticity Based on SLAs: Drive elastic scaling of clusters based on workload or events.

- Service Prioritization: Ability to reflect the priority of services in handling resource contention when scaling up clusters.

- Deployment across multiple Datacenters: Support deployment and handling of failover of services across multiple datacenters.

- REST Web Services APIs: The system should provide REST APIs to facilitate integration with other tools.

Concepts

Carina introduces a few additional concepts that are reflected in the APIs and CLI as well as the system components. These include:

Environment: An environment refers to a collection of VMs in a master-slave configuration. The environment configuration defines the parameters used in creating and managing the environment (e.g., context scripts, context variables, policies). The system launches an environment from its configuration, interacting with OpenNebula to create the VMs, and then manages that environment according to the policies specified in the configuration.

Jobs: Each operation to manage an environment (create, scaleup, scaledown, remove) results in a job being scheduled and run.

Services: Services refer to the consumers of resources within the cloud. Each service can have its own environment configurations, and can create and control its environments independently of other services. Each service is assigned a priority so that the system can arbitrate between services when they compete for resources.

Pools: Resource pools refer to the various clusters or virtual data centers in OpenNebula that can be targets for creating an environment. An endpoint represents a service's access to a pool and is referenced in the environment configuration.
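To make these concepts concrete, here is a minimal Ruby sketch (Ruby because config.rb is Ruby) that models them as plain structs and turns each environment operation into a queued job. It is illustrative only: the field names, the `JobQueue` class and its `submit`/`run_next` methods are hypothetical and are not Carina's actual data model.

```ruby
# Hypothetical model of the concepts above; names and fields are illustrative,
# not Carina's actual schema.
Pool        = Struct.new(:name, :capacity)   # an OpenNebula cluster or VDC
Service     = Struct.new(:name, :priority)   # a consumer of cloud resources
Endpoint    = Struct.new(:service, :pool)    # a service's access to a pool
Environment = Struct.new(:name, :endpoint, :master, :slaves)
Job         = Struct.new(:id, :operation, :environment, :state)

OPERATIONS = %i[create scaleup scaledown remove].freeze

# Each operation on an environment becomes a job that is queued, then run.
class JobQueue
  def initialize
    @jobs = []
    @next_id = 1
  end

  def submit(operation, environment)
    unless OPERATIONS.include?(operation)
      raise ArgumentError, "unknown operation: #{operation}"
    end
    job = Job.new(@next_id, operation, environment, :scheduled)
    @next_id += 1
    @jobs << job
    job
  end

  # Runs the oldest scheduled job (a real manager would call OpenNebula here).
  def run_next
    job = @jobs.find { |j| j.state == :scheduled }
    job.state = :done if job
    job
  end
end

billing = Service.new("billing", 2)
env = Environment.new("billing-web", Endpoint.new(billing, Pool.new("dc1", 50)),
                      "web-master", %w[web-slave-1 web-slave-2])
queue = JobQueue.new
queue.submit(:create, env)
queue.submit(:scaleup, env)
puts queue.run_next.operation
```

Keeping jobs as explicit records rather than running operations inline is what allows them to be tracked and ordered; in Carina the job records live in MySQL rather than in memory.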

System Architecture

The Carina components are designed to run on a management VM that can be launched in an OpenNebula environment and accessed remotely, either through REST APIs or through a simple CLI that wraps the REST API calls. The client will typically run on the oZones server or the OpenNebula master. In the RIM environment, we create an OS account on the oZones server for each service that will deploy VMs on the cloud. Typically, operations personnel or developers for that service log into that account to run the Carina CLI (oneenv).

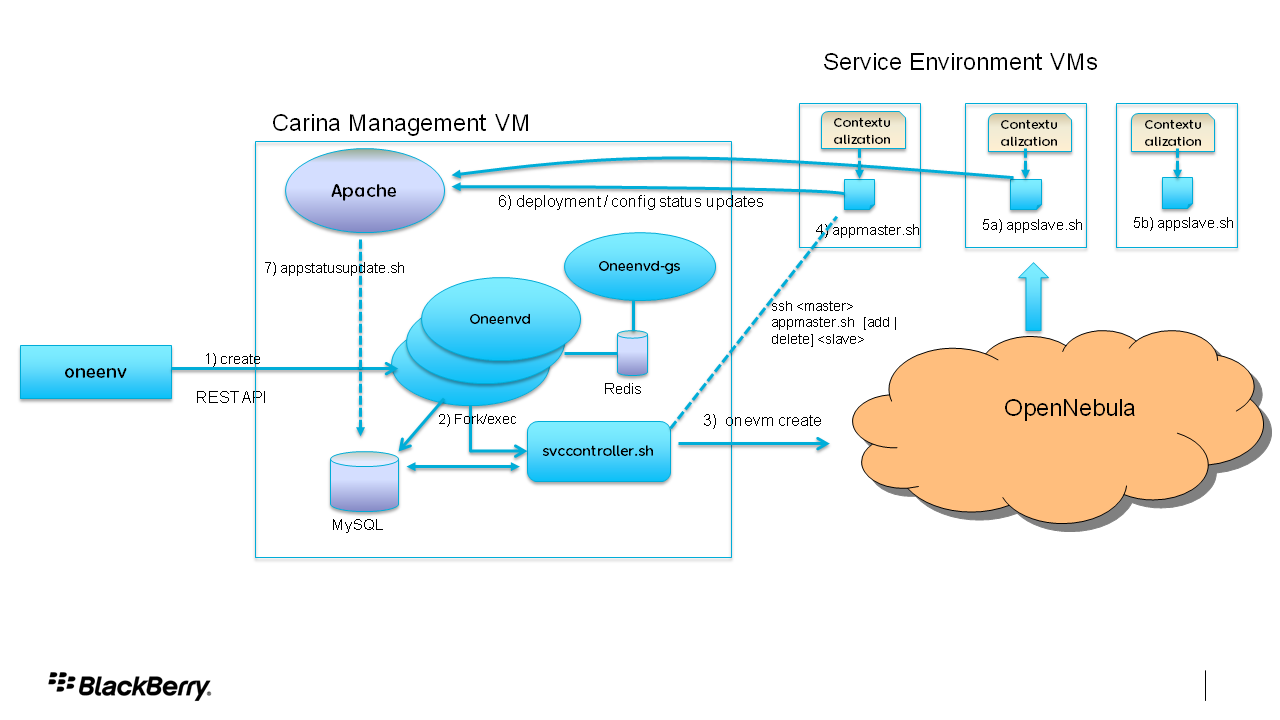
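Because the CLI is described as a thin wrapper over the REST API, each CLI command essentially maps to one HTTP request. The sketch below shows that mapping with Ruby's standard `Net::HTTP` request classes. The routes (`/environments`, `/environments/<name>`) and the `build_request` helper are hypothetical, since documentation of the REST APIs is still on the to-do list.

```ruby
require "net/http"

# Hypothetical routes: Carina's real REST paths are not documented in this post.
ROUTES = {
  "list"   => [Net::HTTP::Get,    "/environments"],
  "show"   => [Net::HTTP::Get,    "/environments/%s"],
  "create" => [Net::HTTP::Post,   "/environments"],
  "delete" => [Net::HTTP::Delete, "/environments/%s"]
}.freeze

# Translates a CLI-style command into a Net::HTTP request object (not sent).
def build_request(command, name = nil, body: nil)
  klass, pattern = ROUTES.fetch(command) do
    raise ArgumentError, "unknown command: #{command}"
  end
  if pattern.include?("%s")
    # Only allow simple names so user input cannot alter the URL path.
    unless name.to_s.match?(/\A[\w.-]+\z/)
      raise ArgumentError, "invalid environment name: #{name.inspect}"
    end
    path = format(pattern, name)
  else
    path = pattern
  end
  request = klass.new(path)
  if body
    request["Content-Type"] = "application/json"
    request.body = body
  end
  request
end

req = build_request("show", "billing-web")
puts "#{req.method} #{req.path}"
```

The request could then be sent with `Net::HTTP.start(host, port) { |http| http.request(req) }`.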

The components running in the management VM include the following:

The Service Controller (svccontroller.sh): This handles the deployment of individual clusters in a master-slave configuration. It reads a set of environment variables reflecting parameters of the environment configuration and calls OpenNebula commands to launch VMs in sequence and updates the MySQL database.
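The ordering the Service Controller enforces, master first and then slaves, can be sketched in Ruby as follows. The real controller is a shell script; the `CARINA_*` environment variable names, `launch_plan` and `launch_sequence` are hypothetical, and the `launcher` lambda stands in for whatever OpenNebula command actually creates each VM.

```ruby
# Hypothetical sketch of launch ordering; the CARINA_* variable names are
# illustrative and are not the variables svccontroller.sh actually reads.
def launch_plan(env = ENV)
  master = env.fetch("CARINA_MASTER_TEMPLATE")
  slave  = env.fetch("CARINA_SLAVE_TEMPLATE")
  count  = Integer(env.fetch("CARINA_NUM_SLAVES", "0"))
  # Master first, then the slaves, matching the sequential launch described above.
  [[:master, master]] + Array.new(count) { |i| [:"slave#{i + 1}", slave] }
end

# Launches VMs strictly in plan order; `launcher` stands in for the OpenNebula
# call and returns a VM id. Each result is a row to record in the database.
def launch_sequence(plan, launcher)
  plan.map do |role, template|
    { role: role, template: template, vm_id: launcher.call(template) }
  end
end

demo_env = {
  "CARINA_MASTER_TEMPLATE" => "web-master",
  "CARINA_SLAVE_TEMPLATE"  => "web-slave",
  "CARINA_NUM_SLAVES"      => "2"
}
next_id = 100
rows = launch_sequence(launch_plan(demo_env), ->(_template) { next_id += 1 })
rows.each { |r| puts "#{r[:role]} #{r[:template]} vm=#{r[:vm_id]}" }
```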

The Environment Manager (oneenvd): This is a per-service daemon that receives requests to run operations and manages the resulting jobs. It also executes the policy manager, which defines the rules for auto-scaling environments.
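As an example of the kind of rule a policy manager might evaluate, here is a simple threshold policy that aggregates one metric across a cluster and decides whether to scale. This is an assumed rule format for illustration, not Carina's policy syntax; `Policy` and `decide` are hypothetical names.

```ruby
# Hypothetical threshold policy; the fields are illustrative, not Carina's
# actual policy syntax.
Policy = Struct.new(:metric, :scale_up_above, :scale_down_below,
                    :min_slaves, :max_slaves)

# Averages one metric across the cluster's VMs and returns :scaleup,
# :scaledown or :none, never leaving the [min_slaves, max_slaves] range.
def decide(policy, samples, current_slaves)
  return :none if samples.empty?

  avg = samples.sum.to_f / samples.size
  if avg > policy.scale_up_above && current_slaves < policy.max_slaves
    :scaleup
  elsif avg < policy.scale_down_below && current_slaves > policy.min_slaves
    :scaledown
  else
    :none
  end
end

cpu_policy = Policy.new("cpu", 0.8, 0.2, 1, 6)
puts decide(cpu_policy, [0.91, 0.85, 0.88], 3)
```

Other triggers, such as time of day or application performance, could be added as further conditions in the same decision function.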

The Scheduler (oneenvd-gs): While individual services manage their own environments through their own instance of ‘oneenvd’, the scheduler arbitrates requests, based on service class, when multiple services compete for the same resource pools. For example, it will scale down environments belonging to lower-class services in order to scale up higher-class environments.
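The arbitration described above can be sketched as a greedy reclaim: when a pool lacks room, take VMs from the lowest-priority environments first, never shrinking any below its minimum size. The greedy strategy, the numeric priority convention and the `Allocation`/`arbitrate` names are assumptions for illustration; the post does not describe the Scheduler's actual algorithm.

```ruby
# Hypothetical arbitration sketch. A larger priority means a more important
# service class; this convention is an assumption for illustration.
Allocation = Struct.new(:env, :priority, :slaves, :min_slaves)

# Returns [[env, vms_to_remove], ...] freeing enough room for `needed` VMs,
# [] if the pool already has room, or nil if the request cannot be met.
def arbitrate(needed, free, requester_priority, allocations)
  shortfall = needed - free
  return [] if shortfall <= 0

  actions = []
  victims = allocations.select { |a| a.priority < requester_priority }
                       .sort_by(&:priority) # reclaim from the lowest class first
  victims.each do |a|
    spare = a.slaves - a.min_slaves          # never shrink below the minimum
    next if spare <= 0

    take = [spare, shortfall].min
    actions << [a.env, take]
    shortfall -= take
    break if shortfall.zero?
  end
  shortfall.zero? ? actions : nil
end

allocs = [
  Allocation.new("batch-jobs", 1, 4, 1),
  Allocation.new("reports", 2, 3, 2),
  Allocation.new("web-frontend", 5, 6, 2)
]
p arbitrate(5, 1, 5, allocs) # => [["batch-jobs", 3], ["reports", 1]]
```

Returning `nil` when the shortfall cannot be covered lets the caller reject or queue the request instead of scaling down other services without satisfying it.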

Apache Server: This hosts a couple of simple CGI scripts, which are called from the contextualization scripts to update the status of the application deployment process in the MySQL database.
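A CGI status script of this kind mostly has to validate its query parameters and turn them into a database update. Below is a minimal sketch; the `vmid`/`status` parameter names, the allowed status values, the `vms` table and the URL in the comment are all hypothetical. In a real CGI script the query string would arrive in the `QUERY_STRING` environment variable.

```ruby
require "uri"

# Hypothetical CGI-side handler: parameter, table and column names are
# illustrative. A context script might request, e.g.,
#   http://<management-vm>/cgi-bin/status?vmid=42&status=ready
ALLOWED_STATUS = %w[booting configuring ready failed].freeze

# Validates the query string and returns [sql, bind_values]. Values are bound
# by the database driver via placeholders instead of being interpolated.
def status_update(query_string)
  params = URI.decode_www_form(query_string).to_h
  vm_id  = Integer(params.fetch("vmid")) # raises unless present and numeric
  status = params.fetch("status")
  unless ALLOWED_STATUS.include?(status)
    raise ArgumentError, "unexpected status: #{status}"
  end
  ["UPDATE vms SET status = ? WHERE vm_id = ?", [status, vm_id]]
end

sql, binds = status_update("vmid=42&status=ready")
puts sql
p binds
```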

MySQL DB: This holds a few tables reflecting various system objects such as environments, jobs, and VMs, which are updated by either the CGI scripts or oneenvd.

Redis: A Redis server facilitates communication between the Scheduler and the per-service Environment Managers. The Scheduler also stores in Redis some state about the allocation requests it receives.
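One simple way to structure the traffic between oneenvd and the Scheduler is to serialize each allocation request as JSON before putting it into Redis. The sketch below uses only Ruby's standard `json` library; the `AllocationRequest` fields are hypothetical, and the Redis commands named in the comment are one possible choice, not necessarily what Carina uses.

```ruby
require "json"

# Hypothetical message an Environment Manager could hand to the Scheduler
# through Redis; the field names are illustrative.
AllocationRequest = Struct.new(:service, :environment, :pool, :vms, :priority) do
  def to_message
    JSON.generate(to_h)
  end

  def self.from_message(text)
    data = JSON.parse(text)
    new(*members.map { |field| data.fetch(field.to_s) })
  end
end

request = AllocationRequest.new("billing", "billing-web", "dc1", 3, 2)
message = request.to_message
puts message
# The string could, for example, be pushed onto a Redis list with LPUSH by
# oneenvd and taken off with a blocking BRPOP by the Scheduler.
```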

The following diagram illustrates how these components interact:

Using Carina

Services using Carina define the configuration of their environments in a config.rb file that contains a set of Ruby hashes. The hashes define a hierarchical collection of name-value pairs that are processed by the Environment Manager (oneenvd). Because the config.rb file is just Ruby code, oneenvd can dynamically load it whenever changes are uploaded, without having to be restarted. More comprehensive usage documentation can be found here.
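The reload-on-change behavior can be sketched as follows, assuming the file's last expression is the configuration hash and that changes are detected by the file's modification time. Both are assumptions, as are every key in the sample config and the `ConfigReloader` class; this is not Carina's actual schema or loader.

```ruby
require "tempfile"

# Hypothetical config.rb contents: the keys are illustrative, not Carina's schema.
SAMPLE_CONFIG = <<~RUBY
  {
    "billing-web" => {
      endpoint: "dc1-cluster",
      master_template: "web-master",
      slave_template: "web-slave",
      num_slaves: 2,
      context_vars: { "APP_PORT" => "8080" },
      policy: { metric: "cpu", scale_up_above: 0.8, scale_down_below: 0.2 }
    }
  }
RUBY

# Re-evaluates the config file only when its modification time changes, so a
# long-running daemon picks up edits without a restart.
class ConfigReloader
  def initialize(path)
    @path = path
    @mtime = nil
    @config = {}
  end

  def current
    mtime = File.mtime(@path)
    if mtime != @mtime
      # The file's last expression (the hash) becomes the new config.
      @config = Object.new.instance_eval(File.read(@path), @path)
      @mtime = mtime
    end
    @config
  end
end

Tempfile.create(["config", ".rb"]) do |file|
  file.write(SAMPLE_CONFIG)
  file.flush
  reloader = ConfigReloader.new(file.path)
  puts reloader.current["billing-web"][:num_slaves]
end
```

Because the file is evaluated as Ruby, it should only be writable by trusted accounts.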

Future Directions

Carina is a relatively new project, and we anticipate that in the short term we will focus on fixing bugs and issues that arise during deployment. While not set in stone, the following are some of the items we hope to address in the coming months:

- Reporting tools

- High availability of Carina Management VM

- Documentation of REST APIs and metric plugin interfaces

- Authorization & Authentication framework for REST APIs

- Simplified setup for new services, debugging and troubleshooting tools

- Storage provisioning integration: Creating and deleting storage volumes

- Extend capabilities to public cloud

Of course, now that Carina is available to the community here, we are more than happy to have the input and participation of others who may be interested in helping us evolve it.

Addendum: There is a new appliance in the OpenNebula marketplace that simplifies installation of the software. More details can be found in Carina’s installation guide.

Interesting post, and thanks to RIM for submitting it to the community. What I don’t completely grasp, without having tried it out yet, is what kind of overlap (if any) this has with tools like Puppet. I’m right in the middle of structuring our system deployment architecture and was using very basic contextualization variables with ONE and then feeding them to Puppet. What else will this add for me?

Thanks for the comment. I see Carina as mostly complementary to tools like Puppet and Chef. Puppet could be used in the context scripts to trigger deployment of applications inside VMs, and also to handle things like configuration updates and patch management. I don’t believe Puppet has the capability to auto-scale multi-VM environments based on policies like time of day, load conditions, application performance or prioritization rules. That’s not to say someone couldn’t build such a tool on top of Puppet. We’d be interested in hearing about your experiences if you have any.