Do you operate a small Cloud infrastructure and need to optimise the centre occupancy? Then FaSS, a Fair Share Scheduler for OpenNebula (ONE), will address your issues!

FaSS is a product of the INDIGO-DataCloud project and has been developed to boost small Cloud infrastructures, like those used for scientific computing, which often operate in a saturated regime: a condition that constrains the free auto-scaling of applications. In those cases, tenants typically pay a priori for a fraction of the overall resources and are assigned a fixed quota accordingly. Nevertheless, they might want to be able to exceed their quota and to profit from additional resources temporarily left unused by other tenants. Within this business model, one definitely needs an advanced scheduling strategy.

FaSS satisfies resource requests using an algorithm that prioritises tasks according to

- an initial weight;

- the historical resource usage of the project.
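As a rough illustration of how these two ingredients can be combined, the sketch below uses the classic fair-share factor described for Slurm's multifactor priority plugin, F = 2^(-U/S), where S is a project's normalised share and U its normalised historical usage. The function name and the example projects are hypothetical, and the formula FaSS actually applies may differ in its details.

```python
def fair_share_factor(usage: float, share: float) -> float:
    """Return a priority factor in [0, 1] from normalised usage and share.

    Both arguments are fractions of the whole infrastructure.
    A project that has used exactly its share gets 0.5; an idle one gets 1.0.
    """
    if share <= 0:
        return 0.0  # no share assigned: lowest priority (an assumption of this sketch)
    return 2.0 ** (-usage / share)


# Hypothetical projects: (initial share, historical usage), both as fractions.
projects = {
    "alice": (0.50, 0.10),
    "bob": (0.25, 0.25),
    "carol": (0.25, 0.65),
}

factors = {
    name: fair_share_factor(usage, share)
    for name, (share, usage) in projects.items()
}

# Highest factor first: projects that under-used their share move to the front.
ranking = sorted(factors, key=factors.get, reverse=True)
print(ranking)
```

A project that has consumed far more than its share is not blocked; it simply drops behind the others in the queue until its relative usage falls again.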

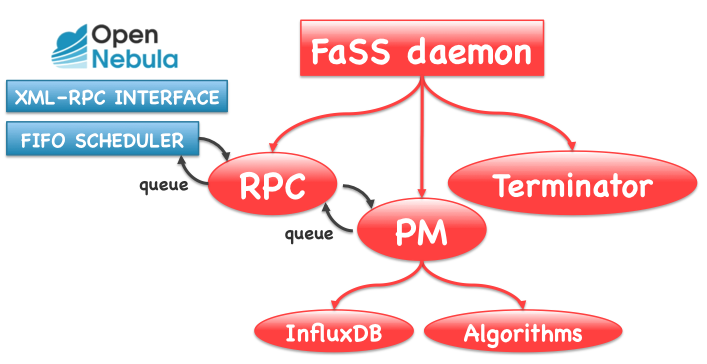

Software design

The software was designed to be as unintrusive as possible in the ONE code, and interacts with ONE exclusively through its XML-RPC interface. Additionally, the native ONE scheduler is preserved for matching requests to available resources.

FaSS is composed of five functional components: the Priority Manager (PM), a set of fair-share algorithms, Terminator, the XML-RPC interface and the database.

- The PM is the main module. It periodically requests the list of pending Virtual Machines (VMs) from ONE and recalculates the priorities in the queue by interacting with an algorithm module of choice.

- The default algorithm in FaSS v 1 is Slurm’s MultiFactor.

- Terminator runs asynchronously with respect to the PM. It is responsible for removing from the queue VMs that have been in the pending state for too long, as well as for terminating, suspending or powering off running VMs after a configurable Time-to-Live.

- The XML-RPC server of FaSS intercepts the calls from the First-In-First-Out scheduler of ONE and sends back the reordered VM queue.

- The FaSS database is InfluxDB. It stores the initial and recalculated VM priorities and some additional information for accounting purposes. No information already present in the ONE DB is duplicated in FaSS.
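To make the PM's polling step more concrete, here is a minimal sketch of how a client could ask ONE for its pending VMs over XML-RPC. It assumes the `one.vmpool.info` call with the argument order documented for ONE 5.x (session, filter flag, start ID, end ID, state) and that state `1` means pending. The helper name, endpoint and credentials are placeholders, and this is not the FaSS source code.

```python
import xml.etree.ElementTree as ET
import xmlrpc.client  # only needed for the usage example at the bottom

PENDING = 1   # VM state code for "pending" (assumed from the ONE documentation)
ALL_VMS = -2  # filter flag selecting the VMs of all users (assumed likewise)


def pending_vm_ids(server, session: str) -> list:
    """Return the IDs of pending VMs, in the order ONE reports them."""
    # The two -1 values leave the ID range open.
    response = server.one.vmpool.info(session, ALL_VMS, -1, -1, PENDING)
    ok, body = response[0], response[1]
    if not ok:
        raise RuntimeError(f"one.vmpool.info failed: {body}")
    # Expected shape: <VM_POOL><VM><ID>...</ID>...</VM>...</VM_POOL>
    pool = ET.fromstring(body)
    return [int(vm.findtext("ID")) for vm in pool.findall("VM")]


# Usage (placeholder endpoint and credentials, adjust to your installation):
#   one = xmlrpc.client.ServerProxy("http://localhost:2633/RPC2")
#   print(pending_vm_ids(one, "oneadmin:password"))
```

A scheduler-side component would call such a helper on a timer, recompute a priority for each returned ID, and store the result, which is the role the PM and the database play in the design above.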

How can I install FaSS?

Detailed instructions are available on GitHub.

The only prerequisites are:

- To install ONE (versions above 5.4 work with FaSS above v1.2; if you run an earlier version of ONE, you need FaSS v1.1 or earlier);

- To install InfluxDB and create fassdb.

All other required packages are installed automatically with the rpm.

You can then install FaSS as root user:

$ cd /tmp/

$ git clone https://github.com/indigo-dc/one-fass

$ cd one-fass

$ cd rpms

$ yum localinstall one-fass-service-v1.3-1.3.x86_64.rpm

The last step is to adjust the configuration file of the ONE scheduler so that it points at the FaSS endpoint instead of the ONE one. In:

/etc/one/sched.conf

change:

ONE_XMLRPC = "http://localhost:2633/RPC2"

to:

ONE_XMLRPC = "http://localhost:2637/RPC2"
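If you prefer to script this change, the following sketch rewrites the `ONE_XMLRPC` line in the text of `sched.conf`. It assumes the line has the form shown above; the function name is hypothetical, and it is worth backing up the file before writing anything to it.

```python
import re

# FaSS XML-RPC endpoint, as given in the text above.
FASS_ENDPOINT = "http://localhost:2637/RPC2"


def point_scheduler_at_fass(conf_text: str, endpoint: str = FASS_ENDPOINT) -> str:
    """Return conf_text with the value of ONE_XMLRPC replaced by endpoint."""
    # Match only active (uncommented) ONE_XMLRPC assignments.
    pattern = re.compile(r'^(\s*ONE_XMLRPC\s*=\s*)"[^"]*"', flags=re.MULTILINE)
    new_text, count = pattern.subn(
        lambda m: f'{m.group(1)}"{endpoint}"', conf_text
    )
    if count != 1:
        raise ValueError(f"expected exactly one ONE_XMLRPC line, found {count}")
    return new_text


sample = '# scheduler settings\nONE_XMLRPC = "http://localhost:2633/RPC2"\n'
print(point_scheduler_at_fass(sample))
```

The function works on a string rather than on the file itself, so you can inspect the result before saving it back to `/etc/one/sched.conf`.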

Is it difficult to use?

Not at all! A detailed usage description can be found on GitHub.

- Edit the initial shares for every user:

$ cd /tmp/one-fass/etc

and edit the file:

shares.conf

- Start FaSS:

systemctl start fass

Now FaSS is ready and working!

Additional features

There are a few additional features that help you keep your Cloud infrastructure clean:

- You can set your VMs to be dynamic, so that they are terminated after a specific Time-to-Live, by instantiating them with:

$ onetemplate instantiate <yourtemplateid> --raw static_vm=0

- Instead of being terminated, your VMs can be powered off, suspended or rebooted by changing the action to be performed in:

/one-fass/etc/fassd.conf

What’s next?

We are implementing several new features in FaSS, for example the possibility of setting the Time-to-Live per user. We are also planning to test several new algorithms. So stay tuned!
