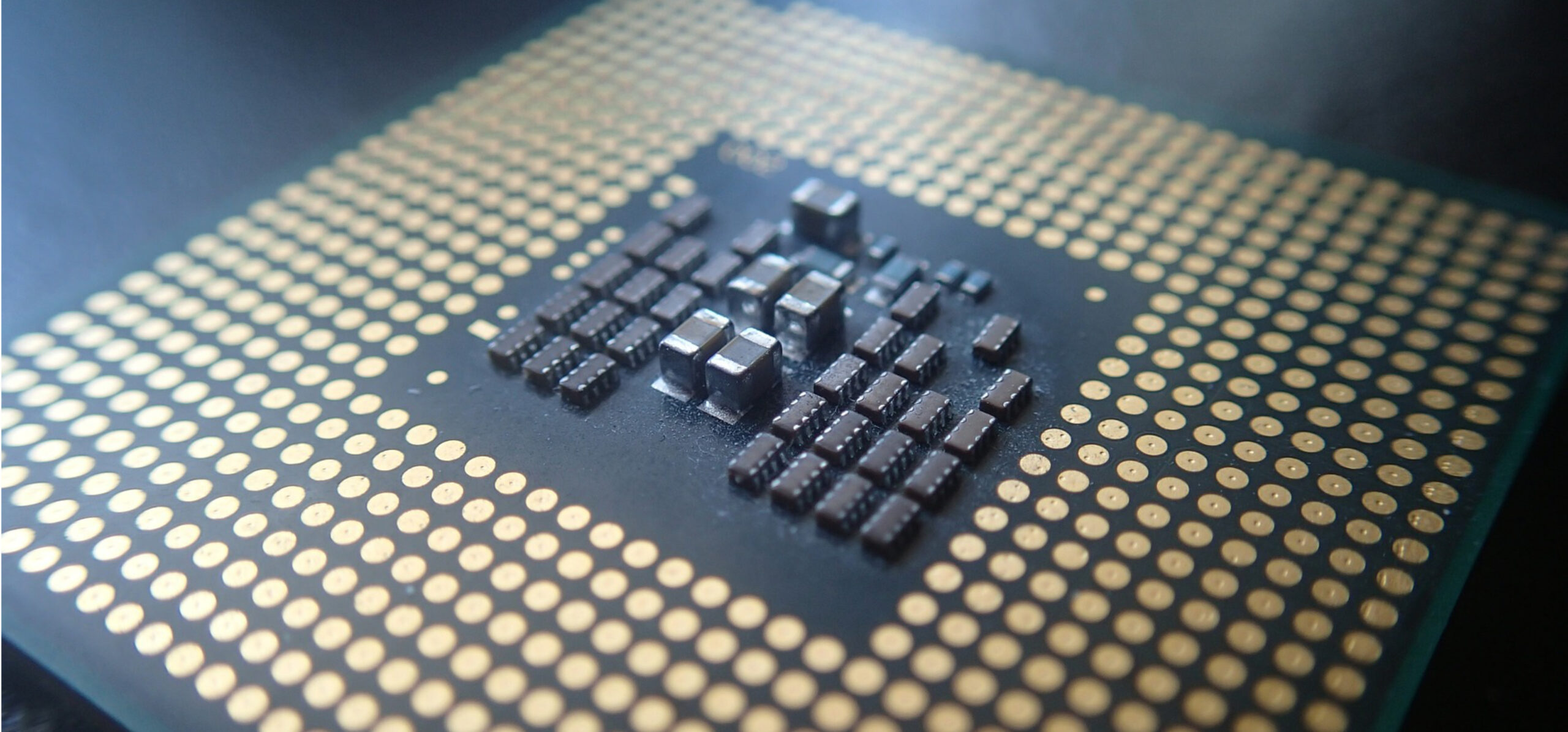

Sometimes it can be hard to choose a CPU model for VMs. There are many factors that can limit our options, like security or performance restrictions, or even the hardware you are using in your data center.

In this post, I’d like to share my approach for selecting a CPU model for KVM x86 hosts, and some tips on how to deal with the possible restrictions you might face during that process.

Selecting a base CPU model

The first step is always to find the closest QEMU CPU model that fits our hardware. To do so, we can use the virsh -c qemu:///system capabilities command. Below is the output when it is run on a laptop:

    <capabilities>
      <host>
        <uuid>4c4c4544-0043-5710-8059-b4c04f334c32</uuid>
        <cpu>
          <arch>x86_64</arch>
          <model>Broadwell-noTSX-IBRS</model>
          <vendor>Intel</vendor>
          <microcode version='180'/>
          <counter name='tsc' frequency='1799996000' scaling='no'/>
          <topology sockets='1' cores='4' threads='2'/>
          <feature name='vme'/>
          <feature name='ds'/>
          <feature name='acpi'/>
          ...

By checking /host/cpu/model we can get the closest QEMU CPU model that fits the hardware of our host, in this case Broadwell-noTSX-IBRS. If all your hosts have the same CPU, you can use this CPU model straightaway.
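The same lookup can be scripted when there are many hosts to inspect. The following is a minimal Python sketch, assuming the capabilities XML has already been captured as a string (for instance from the virsh command above). The function names and the trimmed sample document are illustrative only; they are not part of libvirt or OpenNebula.

```python
import xml.etree.ElementTree as ET

# Trimmed, well-formed sample of the capabilities output (illustrative).
SAMPLE_CAPABILITIES = """
<capabilities>
  <host>
    <cpu>
      <arch>x86_64</arch>
      <model>Broadwell-noTSX-IBRS</model>
      <vendor>Intel</vendor>
      <feature name='vme'/>
      <feature name='ds'/>
    </cpu>
  </host>
</capabilities>
"""


def host_cpu_model(capabilities_xml: str) -> str:
    """Return the text of /capabilities/host/cpu/model."""
    root = ET.fromstring(capabilities_xml)
    model = root.find("./host/cpu/model")
    if model is None or not model.text:
        raise ValueError("no <model> element found under /host/cpu")
    return model.text.strip()


def host_cpu_features(capabilities_xml: str) -> list[str]:
    """Return the extra feature names listed under /capabilities/host/cpu."""
    root = ET.fromstring(capabilities_xml)
    return [f.get("name") for f in root.findall("./host/cpu/feature")]


if __name__ == "__main__":
    print(host_cpu_model(SAMPLE_CAPABILITIES))
    print(host_cpu_features(SAMPLE_CAPABILITIES))
```

Note that the feature elements are the host features that come on top of the reported model, so both pieces of information are worth keeping when comparing hosts.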

Note that the CPU model is retrieved automatically during the host monitoring process and stored in KVM_CPU_MODEL 😉

You may want to group servers by CPU model in different OpenNebula clusters and then use the virtual CPU model closest to the hypervisor model. However, this might not always be an option. In that case, just repeat the process described above on each host to find out which QEMU CPU model fits its hardware specs.

Once you have your server specs, follow QEMU docs to select the best QEMU model supported by all your hosts. This way you’ll make sure you are using the optimum model compatible with all of them while—and this is important—preserving the ability to live migrate VMs.

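For example, imagine your hosts report these three different CPU models: Skylake-Client-IBRS, Broadwell-noTSX-IBRS and Haswell-IBRS. According to the official documentation, the best QEMU model to fit the specs of this heterogeneous datacenter would be a Haswell-generation one: Haswell-IBRS, or Haswell-noTSX-IBRS if, as the noTSX name of the Broadwell model indicates, the TSX features (hle, rtm) are not available on every host.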
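The selection itself boils down to a set intersection followed by a preference lookup. Here is a minimal Python sketch of that idea. Everything in it is an assumption made for illustration: the host names, the per-host sets of usable models (which could be collected, for instance, from virsh domcapabilities, where each model in custom mode carries a usable attribute) and the preference order. The result depends entirely on the data you feed in.

```python
from typing import Iterable, Mapping, Optional

# Hypothetical preference order, most capable model first.
PREFERENCE = [
    "Skylake-Client-IBRS",
    "Broadwell-noTSX-IBRS",
    "Haswell-noTSX-IBRS",
    "IvyBridge-IBRS",
    "SandyBridge-IBRS",
]

# Hypothetical sets of CPU models that each hypervisor is able to run.
USABLE_BY_HOST = {
    "host-skylake": {
        "Skylake-Client-IBRS", "Broadwell-noTSX-IBRS",
        "Haswell-noTSX-IBRS", "IvyBridge-IBRS", "SandyBridge-IBRS",
    },
    "host-broadwell": {
        "Broadwell-noTSX-IBRS", "Haswell-noTSX-IBRS",
        "IvyBridge-IBRS", "SandyBridge-IBRS",
    },
    "host-haswell": {
        "Haswell-noTSX-IBRS", "IvyBridge-IBRS", "SandyBridge-IBRS",
    },
}


def best_common_model(
    usable_by_host: Mapping[str, Iterable[str]],
    preference: Iterable[str],
) -> Optional[str]:
    """Return the most preferred model that every host can run, if any."""
    host_sets = [set(models) for models in usable_by_host.values()]
    if not host_sets:
        return None
    common = set.intersection(*host_sets)
    for model in preference:
        if model in common:
            return model
    return None


if __name__ == "__main__":
    print(best_common_model(USABLE_BY_HOST, PREFERENCE))
```

Because the chosen model is usable on every host in the input, VMs defined with it keep the ability to live migrate between those hosts.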

More info about how to obtain information about the capabilities of the virtualization host via the libvirt toolkit can be found here:

- Driver capabilities XML format: Host capabilities

- Application Development Guide: Capability information

Security and performance restrictions

Once you have selected a base QEMU CPU model, you need to check if it satisfies your restrictions. Here you can find out which features are exposed by each QEMU CPU model.

At this point, it is also quite sensible to check which security mitigations are enabled and which vulnerabilities are left open inside the guest OS. For example, let’s check the content of /sys/devices/system/cpu/vulnerabilities:

    $ cd /sys/devices/system/cpu/vulnerabilities
    $ grep . *
    l1tf:Mitigation: PTE Inversion
    mds:Vulnerable: Clear CPU buffers attempted, no microcode; SMT Host state unknown
    meltdown:Mitigation: PTI
    spec_store_bypass:Vulnerable
    spectre_v1:Mitigation: usercopy/swapgs barriers and __user pointer sanitization
    spectre_v2:Mitigation: Full generic retpoline, STIBP: disabled, RSB filling

We can see here that the CPU of this example is actually vulnerable to spec_store_bypass and, since no microcode support is exposed to the guest, to mds as well.
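When many guests have to be checked, it helps to turn that output into something a script can act on. The sketch below, in Python, parses the name:status lines printed by grep and flags every entry whose status starts with "Vulnerable". The function names are made up for this example, and the sample text is simply the output shown above.

```python
SAMPLE_OUTPUT = """\
l1tf:Mitigation: PTE Inversion
mds:Vulnerable: Clear CPU buffers attempted, no microcode; SMT Host state unknown
meltdown:Mitigation: PTI
spec_store_bypass:Vulnerable
spectre_v1:Mitigation: usercopy/swapgs barriers and __user pointer sanitization
spectre_v2:Mitigation: Full generic retpoline, STIBP: disabled, RSB filling
"""


def parse_vulnerabilities(grep_output: str) -> dict[str, str]:
    """Map each vulnerability name to its reported status string."""
    status = {}
    for line in grep_output.splitlines():
        if ":" not in line:
            continue  # skip blank or unexpected lines
        # Only the first colon separates the file name from its content.
        name, _, state = line.partition(":")
        status[name.strip()] = state.strip()
    return status


def vulnerable_entries(status: dict[str, str]) -> list[str]:
    """Return the sorted names whose status starts with 'Vulnerable'."""
    return sorted(
        name for name, state in status.items()
        if state.startswith("Vulnerable")
    )


if __name__ == "__main__":
    print(vulnerable_entries(parse_vulnerabilities(SAMPLE_OUTPUT)))
```

For the sample above, both mds and spec_store_bypass are reported, which is a good hint about which CPU features the guest is missing.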

Another interesting way of examining the guest OS in search of CPU vulnerabilities is by running some Spectre checkers like https://github.com/speed47/spectre-meltdown-checker.

If you find that you need to add a security or performance feature to the CPU model you are using for your VMs, you can always do so through the RAW section of the VM template, leaving the CPU_MODEL field empty.

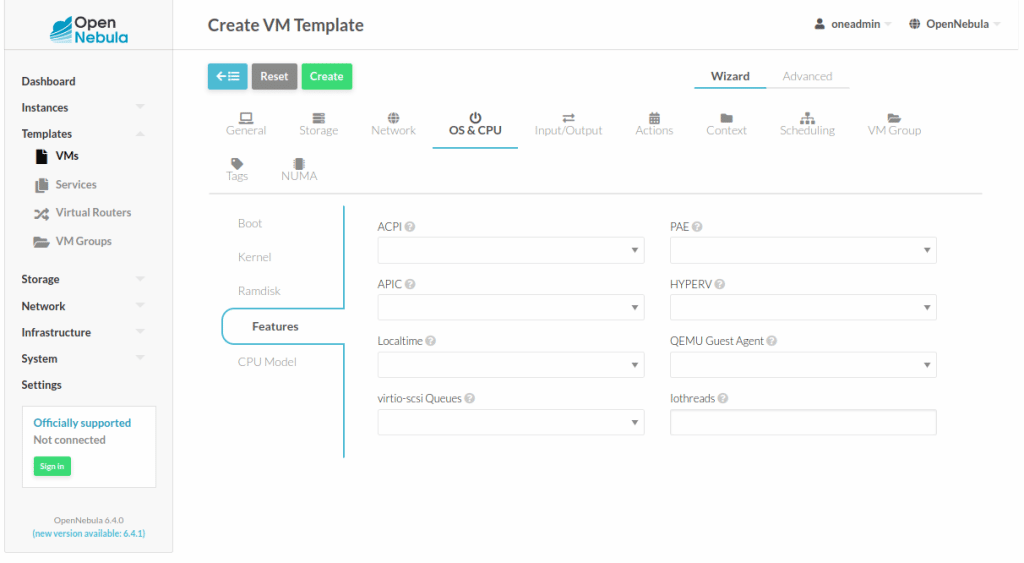

The CPU features are also fully integrated and can be easily enabled in sunstone under OS & CPU > Features section in the VM template. (See the below image for more information)

If you would like to use a CPU feature that is not integrated into the OpenNebula interfaces, e.g. pcid, you can use the following RAW section inside the VM template to enable it:

    RAW = [
      DATA = "<cpu mode='custom'><model fallback='forbid'>$CPU_MODEL</model><feature name='pcid' policy='require'/></cpu>",
      TYPE = "kvm"
    ]
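If several features have to be required at once, the RAW section can be generated instead of written by hand. The following Python sketch builds the same structure as the snippet above for a given model and list of features. The helper name raw_cpu_section and the character whitelist are assumptions made for this example; OpenNebula does not ship such a function.

```python
import re

# Conservative whitelist so that a name cannot break out of the
# single-quoted XML attributes or the double-quoted DATA string.
_SAFE_NAME = re.compile(r"[A-Za-z0-9_.$+-]+")


def raw_cpu_section(model: str, features: list[str]) -> str:
    """Build a RAW template section requiring `features` on top of `model`."""
    for token in [model, *features]:
        if not _SAFE_NAME.fullmatch(token):
            raise ValueError(f"unexpected characters in {token!r}")

    feature_xml = "".join(
        f"<feature name='{name}' policy='require'/>" for name in features
    )
    cpu_xml = (
        "<cpu mode='custom'>"
        f"<model fallback='forbid'>{model}</model>"
        f"{feature_xml}"
        "</cpu>"
    )
    return f'RAW = [\n  DATA = "{cpu_xml}",\n  TYPE = "kvm"\n]'


if __name__ == "__main__":
    print(raw_cpu_section("Broadwell-noTSX-IBRS", ["pcid"]))
```

Called with the model found earlier and pcid as the only feature, it prints a section equivalent to the one shown above, with the model name filled in.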

We can make this configuration the default by modifying /etc/one/vmm_exec/vmm_exec_kvm.conf.

You can find more information about important features of Intel x86 CPUs, like Spectre mitigations, right here.

Remember that a very convenient way to prevent cross-VM attacks based on the speculative nature of the processors is the use of the CPU pinning feature introduced in OpenNebula 5.10, which isolates a VM in a whole core using the core policy.

As always, don’t hesitate to send us your comments or questions!
